This post was written longhand. Conversion to text was done with MyScript Notes; I made some minor manual edits to correct words and explain a few things (in parentheses).

I see a few people writing about sharing their struggles with large language models, saying we are all still figuring this out.

For me it is easier to do at the moment of a small success.

Stopping a model when it goes off the rails - but automated

The other day I tried to develop an extension for Pi (a coding agent). I want to stop a model when it goes off the rails.

I now have a local model that is fast and can call tools (search, run tests, edit code, etc.). It does, however, frequently show some quirks typical of model-assisted coding:

- Replace production code that works with throwing an exception

- Write if statements in tests

- Add fallbacks for things that can’t fail

- Find “problems” in code that works (passes tests and other checks, works for the user, etc.)

Long term the solution probably is to work in small steps. But these steps come from experience.

Catching the problem when it happens by simply matching some words is a starting point for that: scan any edits for keywords and prompt the user for permission. When the session is not interactive, just abort.
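A minimal sketch of that idea, kept free of any agent types so it can be tested on its own. Every name here (`scanEdit`, `decide`, `Finding`, `Verdict`, the `SUSPICIOUS` list) is made up for illustration and is not part of Pi's extension API; the two patterns are only examples of what one might match for the quirks listed above.

```typescript
// Hypothetical names throughout; this is not Pi's extension API.
type Verdict = "allow" | "ask" | "abort";

interface Finding {
  label: string;
  line: number; // 1-based line number within the edit text
}

// Illustrative patterns only; tune them to your own code base.
const SUSPICIOUS: { label: string; pattern: RegExp }[] = [
  { label: "throws an exception", pattern: /throw new \w*(Error|Exception)\(/ },
  { label: "adds a fallback", pattern: /\bfallback\b/i },
];

// Scan the text of a proposed edit line by line and collect every match.
function scanEdit(text: string): Finding[] {
  const findings: Finding[] = [];
  text.split("\n").forEach((content, index) => {
    for (const { label, pattern } of SUSPICIOUS) {
      if (pattern.test(content)) findings.push({ label, line: index + 1 });
    }
  });
  return findings;
}

// Clean edits pass; suspicious ones need permission, or are aborted
// when nobody is there to give it.
function decide(findings: Finding[], interactive: boolean): Verdict {
  if (findings.length === 0) return "allow";
  return interactive ? "ask" : "abort";
}
```

Keeping the decision in a plain function means the part that talks to the agent only has to map `"ask"` to a prompt and `"abort"` to whatever the agent offers for blocking an edit.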

Looks simple, so I let a more powerful but slower local model figure out how to build an extension. Every Pi session opens with an invitation:

Pi can explain its own features and look up its docs. Ask it how to use or extend Pi.

After some iteration we had a plan and Pi generated a plausible looking extension.

I tested it manually, in Pi. Nothing happened. Back to the drawing board.

I went through quite a few iterations, compared my code with the sample code, and looked into the Pi API. No luck.

Eventually I installed the sample extension. That worked. Then I deleted most of my extension, added some logging - I could see something.

I learned quite a bit about Pi and its extension mechanism.

It looks like only the last “UI notification” gets shown for any extension point (e.g. a tool call or system startup). I am not yet sure if this is by design or not.

I did take away that, here too, I want to work test-first for parts that do not interface with the agent directly. The feedback loop is just too slow otherwise.

This also required experimentation. I did not want to set up a separate project for an extension that is little more than an idea. But I do want tests. So I asked a model again. The suggestion was to use Deno, because that has testing built in. Some more fiddling followed:

- Get Deno to work in the nono sandbox

- Learn that Pi auto-loads anything in the extensions folder. If you put a test there, Pi crashes. (The test does not have the method that defines an extension. All files in .pi/agent/extensions must have it.)

- Learn that “domain” files also don’t work there. (I wanted to have the extension files thin and the testable functions separate, so that the tests don’t depend on pi and its types.)

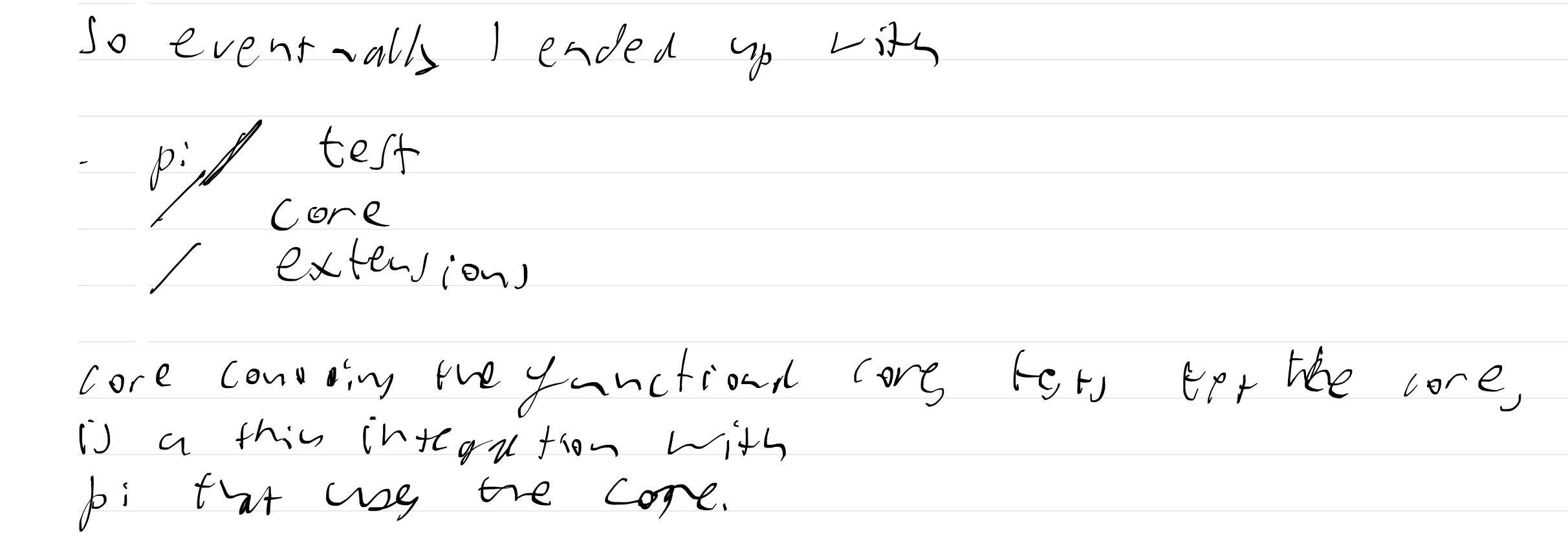

So eventually I ended up with this design:

- .pi/test

- .pi/core

- .pi/extensions

Core contains the functional cores; test tests the core. Extensions is a thin integration with Pi that uses the core. (the Deno project lives in )
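To make the split concrete, here is a rough sketch of how a core function and a thin shell might relate. It is shown as one block for brevity; in the layout above the first function would live in core and the second in extensions. `AgentUi`, `findKeywords` and `guardEdit` are invented for this example and are not types or functions from Pi; the real extension would replace `AgentUi` with whatever the agent actually provides.

```typescript
// Sketch only: `AgentUi` is a made-up stand-in, not a type from Pi.
interface AgentUi {
  interactive: boolean;
  confirm(message: string): Promise<boolean>;
}

// core: a plain function with no agent types, so tests need no agent.
function findKeywords(text: string, keywords: string[]): string[] {
  const lower = text.toLowerCase();
  return keywords.filter((k) => lower.includes(k.toLowerCase()));
}

// extensions: a thin shell that only wires the core to the agent's UI.
// Resolves to true when the edit may proceed.
async function guardEdit(text: string, ui: AgentUi): Promise<boolean> {
  const hits = findKeywords(text, ["fallback", "not implemented"]);
  if (hits.length === 0) return true; // nothing suspicious
  if (!ui.interactive) return false; // nobody to ask, so abort
  return ui.confirm(`Edit mentions: ${hits.join(", ")}. Apply it?`);
}
```

A test in the test folder then only needs to call `findKeywords` with a few strings, for example from inside a `Deno.test` callback. The shell can be exercised with a fake `AgentUi` object, without starting the agent.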

This was clear enough that the slow, dense model could build a second extension and performance metrics in chat, with relatively little guidance after iterations on a plan.

I haven’t looked at the code in detail yet. Not out of principle, but because it is late, and I want to write down my trial and error before I forget.

Afterword

I hope you enjoyed this slowly written note. I have added the handwritten draft as a PDF.

I found that writing in longhand helped me slow down and step away from the slot machine that wishcraft can sometimes be.